Much of what we think we know about how the Mythos model works is assumption, and very little of it is verified fact. That is the short answer to a series of questions that have been swirling since the limited release of Anthropic’s powerful new product. For now, Mythos is being presented publicly as a “black box.”

We know what it can do. We do not know how it was built, what its internal architecture looks like, or precisely which techniques were used to train it.

The technology remains commercially unavailable to the general public, and the technical details of the model’s architecture remain hidden along with it. While its creators are managing the unprecedented capabilities of their “mythical” tool on multiple fronts, the scientific community appears divided on whether Mythos represents a genuine rupture with everything that came before it or simply the next expected step in the seemingly irreversible ascent of artificial intelligence.

Where Mythos excels

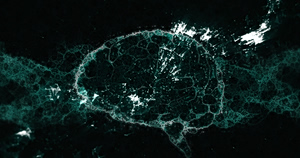

Mythos appears to possess more sophisticated forms of reasoning than existing AI models. Anthropic describes it as a general-purpose language model, but one capable of detecting errors in code with exceptional precision. As the company’s website states, Claude Mythos Preview makes one thing clear: AI models have reached a level of programming capability that allows them to surpass nearly all humans, save for the most highly specialized, in identifying and exploiting software vulnerabilities.

It is therefore being positioned as a powerful support tool in the field of cybersecurity, capable of recognizing vulnerabilities in major commercial and government digital systems. These assessments are based primarily on an extensive technical paper published by Anthropic on April 7, which presents the model’s impressive initial capabilities across hypothetical cybersecurity attack scenarios.

The potential danger

Beyond detecting functional errors in digital systems, Claude Mythos is reported to have a further capability: exploiting the security gaps it finds. Mythos can identify a flaw in a piece of code, assess its implications, and map out a sequence of hypothetical actions that could lead to its exploitation. In short, it is capable of charting potential breach and attack pathways.

Anthropic warns that the consequences could be serious for the economy and public safety if the model were to fall into the wrong hands. To evaluate its capabilities in real-world cybersecurity scenarios under strict supervision, the company is making the model available on a limited basis to selected partners through Project Glasswing — a research consortium focused on identifying and addressing security vulnerabilities. Among those partners are technology giants including Microsoft and Google.

The need for vigilance is acknowledged by the international scientific community as well, though there is no consensus on the scale of the risk. Many view the emergence of such advanced systems as a natural evolution of artificial intelligence rather than a step-change threat.

The debate remains open as to whether Mythos constitutes a direct and uncontrollable threat in practice, or whether some of the concern is being overstated given the technical constraints and existing safety measures already in place. What is certain is that the trajectory of these developments will continue to command all of our attention.