A Los Angeles jury on Wednesday found Meta and Alphabet’s Google negligent for designing social media platforms that harmed children, awarding $6 million in damages in what could become a landmark case for the tech industry. Meta was ordered to pay $4.2 million, and Google $1.8 million, though experts note the amounts are minor relative to the companies’ vast resources.

The case, which centered on a 20-year-old woman identified in court as Kaley, focused not on the content of posts but on the platforms' design features, such as Instagram's "infinite scroll" and YouTube's autoplay recommendations. The plaintiff argued that these features encouraged addictive behavior from a young age.

Mark Lanier, lawyer for the plaintiff Kaley G.M., speaks with the media outside the court after the jury found Meta and Google liable in a key test case accusing Meta and Google's YouTube of harming children's mental health through addictive social media platforms, in Los Angeles, California, U.S., March 25, 2026. REUTERS/Mike Blake

Bellwether trial for child safety lawsuits

Legal analysts describe the verdict as a bellwether, potentially influencing thousands of similar lawsuits consolidated in California state courts. “Accountability has arrived,” said the plaintiff’s lead attorney, emphasizing the jury’s recognition of tech companies’ responsibility to protect young users.

Meta and Google plan to appeal the decision. U.S. law, notably Section 230 of the Communications Decency Act, generally shields social media firms from liability for content their users post, but this case shows how design choices could still expose companies to legal risk. Analysts warn the ruling may prompt tech giants to implement stronger safeguards for minors, potentially weighing on growth and engagement metrics.

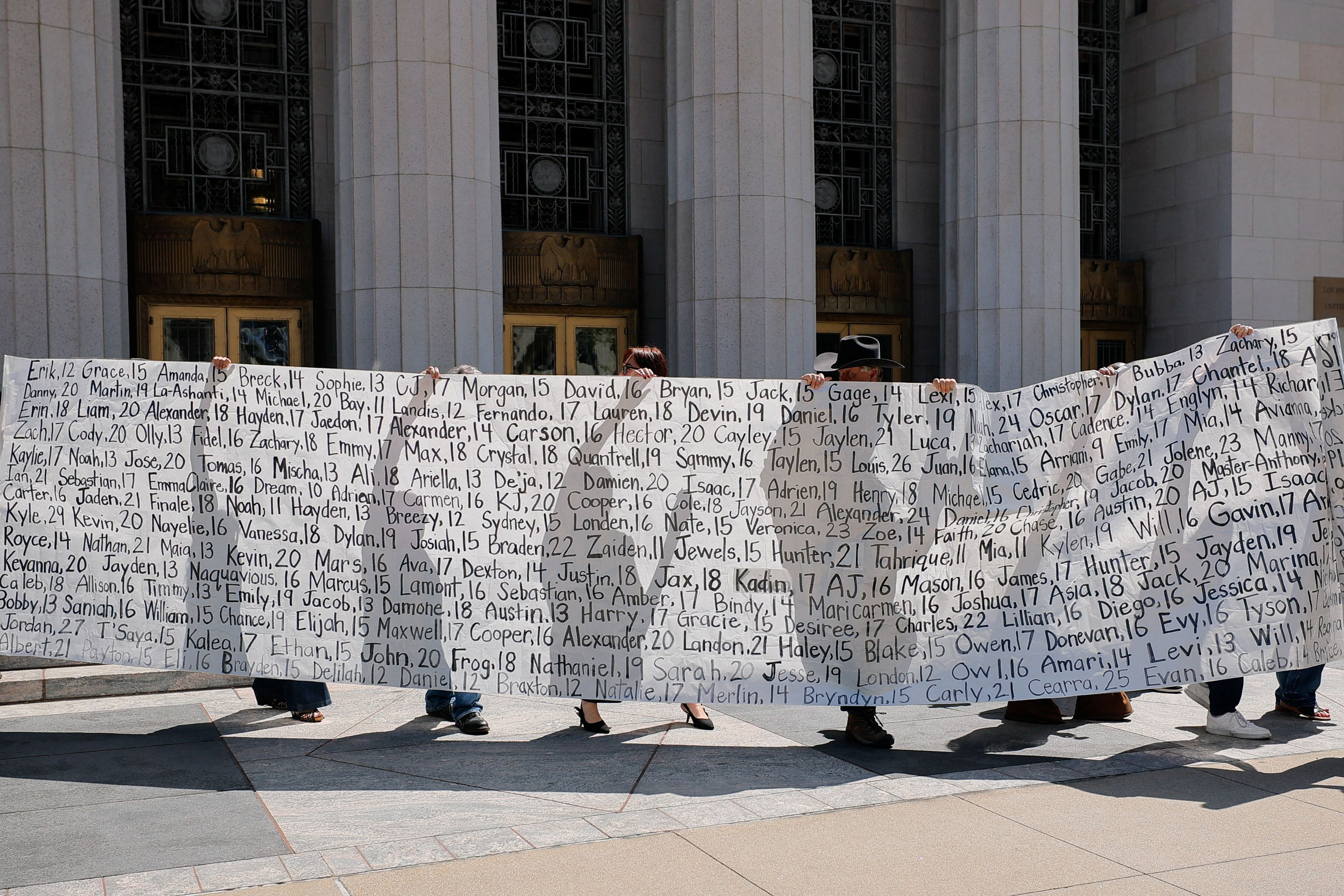

Parents who say they lost their children to social media hold up a banner bearing the children's names outside the court after the jury found Meta and Google liable in a key test case accusing Meta and Google's YouTube of harming children's mental health through addictive social media platforms, in Los Angeles, California, U.S., March 25, 2026. REUTERS/Mike Blake

Broader legislative and legal context

Mounting criticism over child and teen safety on social media has shifted debates from Congress to courts and state legislatures. Last year, at least 20 U.S. states passed laws regulating children’s access to social media, including age verification and limitations on device use in schools. Tech trade group NetChoice is challenging some of these requirements in court.

Senators Marsha Blackburn and Richard Blumenthal called for federal legislation to ensure platforms are designed with child safety in mind. Additional trials are set in Los Angeles and Oakland this year, involving Instagram, YouTube, TikTok, and Snapchat, signaling a growing legal spotlight on social media companies.

Trial evidence and company defenses

During the trial, jurors reviewed internal documents showing that Meta and Google targeted younger audiences to boost engagement. Executives, including Meta CEO Mark Zuckerberg, testified in the companies' defense, stressing the importance of user expression and arguing that personal factors contributed to the plaintiff's mental health struggles.